This summer, three of the major Hollywood unions are negotiating new contracts. The WGA went on strike last month. SAG-AFTRA is currently in negotiations, and there is speculation that its members will join the WGA in solidarity when their current contract expires June 30 (although negotiations may extend past this date). On Friday, June 23, DGA members voted to ratify a three-year contract with the studios, with 87% in favor.

One of the key issues in contention with all three major guilds is the use of AI technologies and how they affect the relevant areas of the film industry. It's clearer how AI affects writers and actors. For writers, it can be used to complement or even completely supplant aspects of the writing process. For actors, advances in AI that create lookalikes and soundalikes are both fascinating and frightening.

What's less clear is how AI impacts members of the Directors Guild.

According to The Hollywood Reporter, the contract specifies "that generative artificial intelligence (Gen AI) is not a person and that work performed by DGA members must be assigned to a person." Moreover, "Employers may not use Gen AI in connection with creative elements without consultation with the Director or other DGA-covered employees," and top entertainment companies and the union must meet twice annually to "discuss and negotiate over AI." There's a lot to unpack in that little paragraph, so let's dig in.

What is “generative” AI?

You've heard of ChatGPT. "GPT" stands for "generative pre-trained transformer," and "Chat" refers to the way you interact with it: through conversation. It can generate original content based on your requests. DALL-E (named after the artist Salvador Dalí and the Pixar character WALL-E) generates original art. McKinsey has a great rundown on the basics of AI, and Runway is focused on using generative AI technologies for storytelling. It's no surprise, then, that there's a lot of concern about job losses in the creative industries when technologies are designed to emulate the original output of people. Everyone would like technology to make their jobs easier, but we're wary of its potential to threaten our ability to "generate" an income.

Different kinds of AI

If you ask ChatGPT, it will tell you that there are at least eight commonly recognized forms of AI: Narrow, General, Superintelligent, Machine Learning, Deep Learning, Reinforcement Learning, Natural Language Processing and Computer Vision.

Narrow AI

Apple's Siri and Amazon's Alexa fall into this category. They focus on a specific task, and their intelligence allows them to excel at that one thing. These systems can be used for recommendation engines, but they don't possess "general" intelligence. Narrow, or weak, AI can provide plenty of value in post-production. It can do things like automatically match the color of two shots or duck the volume of the music. If NAB 2023 was any indication, post-production pros will see new Narrow AI-powered tools coming online every day.

General AI

General AI, also known as AGI (artificial general intelligence), is a theoretical AI that is the opposite of Narrow AI. It would be smart across many domains and have many skills. Wired reports, "Microsoft Research, with help from OpenAI, released a paper on GPT-4 that claims the algorithm is a nascent example of artificial general intelligence (AGI)." In theory, AGI would be able to think on a human level. The problem is how to align it with our own interests.

Superintelligent AI

Superintelligent AI, also known as ASI (artificial superintelligence), is another theoretical AI, one that surpasses human intelligence rather than just matching it. ASI would have thinking skills of its own. We don't know whether it is possible to create, and if it is, we don't know whether we can control it.

Machine Learning (ML)

Machine learning comes in various forms, including supervised, unsupervised and semi-supervised learning. A machine is trained on a data set, which may or may not come labeled with the "right" answers. Either way, the machine begins to spot patterns, some of which might not be apparent to us, and eventually it can learn to do things like identify a dog in a picture. Generative AI is built on machine learning algorithms, which are the foundation for the technologies we are seeing today.
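For readers who want to see what "trained on a data set" means in practice, here is a minimal sketch of supervised learning in Python. It assumes a made-up data set of shots described by two hypothetical features (average brightness and blue tint), each labeled "day" or "night" by a person. The technique is a nearest-centroid classifier, one of the simplest supervised methods, and not necessarily how any production tool works: it averages the examples for each label, then assigns a new shot to the closest average.

```python
from math import dist

# Hypothetical labeled training data: (average brightness, blue tint) per shot,
# each tagged with the "right" answer a person supplied.
TRAINING_DATA = [
    ((0.82, 0.30), "day"),
    ((0.75, 0.25), "day"),
    ((0.90, 0.35), "day"),
    ((0.20, 0.60), "night"),
    ((0.15, 0.70), "night"),
    ((0.25, 0.55), "night"),
]


def train(examples):
    """Learn one centroid (the average feature vector) per label."""
    sums = {}
    counts = {}
    for features, label in examples:
        acc = sums.setdefault(label, [0.0] * len(features))
        for i, value in enumerate(features):
            acc[i] += value
        counts[label] = counts.get(label, 0) + 1
    return {label: tuple(v / counts[label] for v in acc) for label, acc in sums.items()}


def predict(model, features):
    """Assign the label whose centroid is closest (Euclidean distance) to the new shot."""
    return min(model, key=lambda label: dist(model[label], features))


model = train(TRAINING_DATA)
print(predict(model, (0.80, 0.28)))  # day
print(predict(model, (0.18, 0.65)))  # night
```

Nobody wrote a rule like "bright means day" here; the pattern came entirely from the labeled examples, which is the essence of supervised learning.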

Deep Learning

This is a subset of Machine Learning. Google's new generative AI search results say, "Deep Learning structures algorithms in layers to create an 'artificial neural network' that can learn and make intelligent decisions on its own." Statements like this might send a chill down your spine, but those "decisions" have more to do with recognizing and identifying patterns than with Terminator-style decisions about who lives and who dies. Still, how a Deep Learning system reaches its conclusions can be a bit of a mystery. We know that Deep Learning requires a large set of data, so it is easy to see how a platform like Netflix can use it: by analyzing its members' viewing habits, it can feed a recommendation engine.
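To illustrate what "structures algorithms in layers" means, here is a toy two-layer neural network in Python. The weights are hand-picked assumptions, chosen so the network computes "exclusive or" (output 1 when exactly one input is on). A real Deep Learning system stacks many more layers and learns its weights from large amounts of data instead of having them set by hand.

```python
def step(x: float) -> float:
    """Activation: the neuron 'fires' (1.0) if its weighted input is positive."""
    return 1.0 if x > 0 else 0.0


def layer(inputs, weights, biases):
    """One fully connected layer: each neuron weighs every input and adds a bias."""
    return [
        step(sum(w * x for w, x in zip(neuron_weights, inputs)) + bias)
        for neuron_weights, bias in zip(weights, biases)
    ]


# Hand-picked (hypothetical) weights; training would normally find these.
HIDDEN_WEIGHTS = [[1.0, 1.0], [1.0, 1.0]]
HIDDEN_BIASES = [-0.5, -1.5]   # neuron 1 behaves like OR, neuron 2 like AND
OUTPUT_WEIGHTS = [[1.0, -1.0]]
OUTPUT_BIASES = [-0.5]         # "OR but not AND" is exclusive or


def network(x1: float, x2: float) -> float:
    """Pass the inputs through the hidden layer, then the output layer."""
    hidden = layer([x1, x2], HIDDEN_WEIGHTS, HIDDEN_BIASES)
    return layer(hidden, OUTPUT_WEIGHTS, OUTPUT_BIASES)[0]


for a in (0.0, 1.0):
    for b in (0.0, 1.0):
        print(a, b, network(a, b))
```

Neither layer can compute "exclusive or" on its own; the answer emerges only from one layer feeding the next. Deep networks apply the same idea at vastly larger scale, which is also why their reasoning is hard to trace.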

Reinforcement Learning

When you put an AI into an unpredictable environment, how does it make decisions? That's what Reinforcement Learning teaches the machine. It makes decisions and observes the consequences. If it is rewarded, it learns to do more of that; if it is punished, it learns to do less. This kind of AI will make a huge impact on online marketing. Multiple variations of ads will proliferate, and AI will be able to adapt, deploy and adapt again based on whatever is most profitable.
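The reward loop described above can be sketched with a multi-armed bandit, a simplified form of reinforcement learning in which an agent repeatedly picks one option and learns from the result. This Python sketch assumes three hypothetical ad variants with made-up click-through rates that the agent cannot see. It uses the common epsilon-greedy strategy: usually show the best-performing ad so far, but occasionally try a random one. Real ad platforms are far more elaborate, and here a missed click is simply a reward of zero rather than a true punishment.

```python
import random

# Hypothetical ad variants and their true (hidden) click-through rates.
# The agent never reads these numbers; it only observes clicks.
TRUE_CLICK_RATES = {"ad_a": 0.02, "ad_b": 0.04, "ad_c": 0.15}


def run_epsilon_greedy(rounds: int, epsilon: float = 0.1, seed: int = 7):
    rng = random.Random(seed)
    ads = list(TRUE_CLICK_RATES)
    shows = {ad: 0 for ad in ads}    # how many times each ad was shown
    value = {ad: 0.0 for ad in ads}  # running estimate of each ad's click rate

    for _ in range(rounds):
        if rng.random() < epsilon:
            # Explore: show a random ad to keep learning about all of them.
            ad = rng.choice(ads)
        else:
            # Exploit: show the ad with the best estimated click rate so far.
            ad = max(ads, key=lambda a: value[a])
        # Observe the consequence: reward 1 for a click, 0 for no click.
        reward = 1.0 if rng.random() < TRUE_CLICK_RATES[ad] else 0.0
        shows[ad] += 1
        # Incremental average: nudge the estimate toward the observed reward.
        value[ad] += (reward - value[ad]) / shows[ad]

    return shows, value


shows, value = run_epsilon_greedy(rounds=10_000)
print(shows)
print(max(shows, key=shows.get))  # the ad the agent ended up favoring
```

Given enough rounds, the agent should end up showing the variant with the highest hidden click rate most often, while the small `epsilon` keeps it testing the others in case audience behavior changes.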

Natural Language Processing (NLP)

This aspect of AI speaks to its ability to understand and output language like a person. By understanding linguistics, the computer learns how to sound like us. This capability is great for things like spell check, transcription and translation. This tech is already taking post-production by storm.

But for those who make their living writing, this capability feels like an existential threat rather than a helping hand. This explains why the WGA has focused on preventing studios from supplanting writers with machines. Writers can use AI to assist their process, but they don't want to be replaced by it.

Computer Vision

"If AI enables computers to think, Computer Vision enables them to see, observe and understand," says IBM. Netflix is using Computer Vision to create match-cut tools. This technology provides the input necessary for a computer to "watch" a film. Then Deep Learning begins to understand patterns in those films. VFX tech like rotoscoping and relighting is already being simplified with Computer Vision.

Notice that "generative" is not on that list. That's because generative AI combines these different algorithms. The "pre-trained transformer" part of ChatGPT refers to its ability to use statistics to establish relationships between words in a sentence (or a larger body of text, such as a script), based on an enormous collection of text written by people. In effect, it learns to imitate the way people write by studying their writing. You can see how Netflix uses Machine Learning and Computer Vision in a video it released four years ago about how these technologies are transforming the entire industry.
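A transformer is far too large to show here, but the basic idea of learning word relationships from human-written text can be illustrated with a much older and simpler statistical technique: a bigram model, which only counts which word tends to follow which. The Python sketch below uses a tiny made-up corpus. It is not how GPT works internally (transformers use learned attention across the whole context); it only illustrates "predict the next word from statistics."

```python
from collections import Counter, defaultdict
from typing import Optional

# A tiny, made-up corpus standing in for the enormous body of human-written
# text a real model is pre-trained on.
CORPUS = (
    "the director calls action . the actor hits the mark . "
    "the director calls cut . the editor trims the shot ."
)


def train_bigrams(text: str) -> dict[str, Counter]:
    """Count how often each word is followed by each other word."""
    words = text.split()
    following: dict[str, Counter] = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        following[current][nxt] += 1
    return following


def most_likely_next(model: dict[str, Counter], word: str) -> Optional[str]:
    """Return the word most often seen after `word`, or None if never seen."""
    if word not in model:
        return None
    return model[word].most_common(1)[0][0]


def generate(model: dict[str, Counter], start: str, length: int) -> list[str]:
    """Greedily extend `start` by up to `length` words, one prediction at a time."""
    words = [start]
    for _ in range(length):
        nxt = most_likely_next(model, words[-1])
        if nxt is None:
            break
        words.append(nxt)
    return words


model = train_bigrams(CORPUS)
print(most_likely_next(model, "the"))       # director
print(" ".join(generate(model, "the", 2)))  # the director calls
```

A model like this only ever looks at one previous word, so its output quickly becomes repetitive. Part of what makes transformers so much more capable is that they weigh the relationships among all the words in the context at once.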

The controversy around “generative” AI in the DGA contract

The contract's specific reference to "generative" AI has caused concern among those who feel the term is too narrow, given that there are various kinds of AI. The worry is that if the AMPTP (the Alliance of Motion Picture and Television Producers) is using such precise language for "generative AI," it may open the door to other AI-derived technologies that go by a different name. Others argue that the language is sufficient and that the contract contains provisions to prevent that kind of loophole from being exploited. Ultimately, the lawyers will have to battle that out.

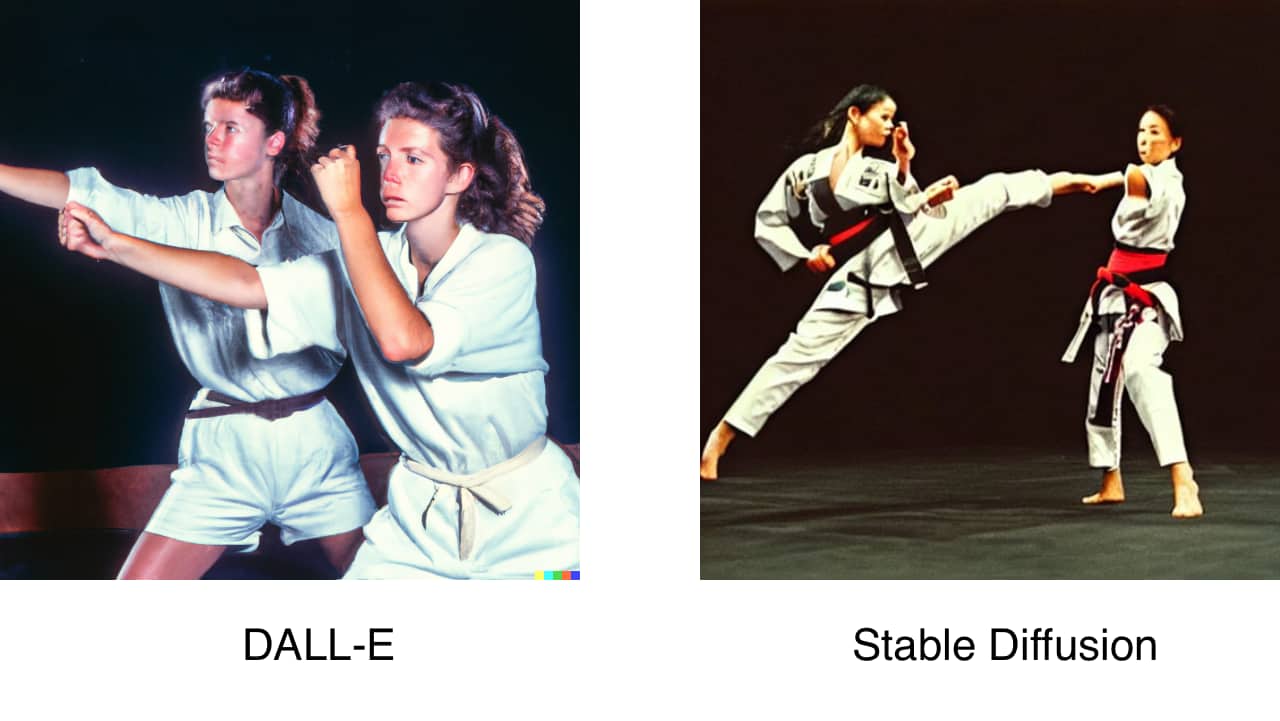

In the meantime, generative AI keeps improving. The internet is full of examples of images created by AI. But what about aspects like lighting, historical era, costumes, focal lengths, capture medium and lens choices? Here are three examples of prompts for DALL-E and Stable Diffusion that incorporate that kind of language:

- Two men playing chess photo at golden hour in New York City with a bounce fill used on a 19mm leica lens at maximum aperture shot on Kodak Kodachrome film.

- A portrait of a woman on a beach with a bounce fill used shot on a Leica 50mm lens at f.95

- Two women martial arts fighters shot with a telephoto lens from the 1990s.

“Without consultation”

The examples above feel simultaneously laughable and threatening. If AI can reference the characteristics of specific lenses and lighting techniques to generate images, you'd better believe it will be deployed in all corners of post-production. This is why the agreement stipulates that Gen AI can't be used in connection with creative elements "without consultation" with the director. Of course, the concern is that this consultation may not be in good faith. Will directors simply be "notified" (rather than genuinely consulted) when AI is brought in to change or generate imagery? Video collaboration, review and approval tools will become far more significant in helping directors navigate these waters.

Impact on assistant directors and UPMs

The DGA agreement focuses on AI "in connection with creative elements." This qualifier raised concerns about the "non-creative" aspects of the director's department. Script breakdowns, scheduling and more are part of the responsibilities of assistant directors and unit production managers. The concern is that much of the work of these "below-the-line" positions may be automated by AI.

Impact on other union negotiations

Forbes points out that it may be easier for the DGA than for other unions to win concessions on the use of AI in its members' jobs. SAG-AFTRA and the WGA will be watching closely, because their members may be more vulnerable than directors to having their work replaced by AI. The WGA wants assurances that writers won't be replaced by a chat interface. SAG-AFTRA must represent the concerns of actors, stunt coordinators and background actors, and its members have already authorized a strike if necessary.

Conclusion

The impact of AI will be felt deeply across the entire industry. It will change both the size and shape of the industry as a whole. New roles will be created, and current ones will have their responsibilities reshaped. At the same time, it is imperative that we recognize that it is the human resonance in a work of art that makes it unique. It’s the capturing of creative energy that makes it valuable, and a machine can’t replace that.

MediaSilo allows for easy management of your media files, seamless collaboration for critical feedback, and out-of-the-box synchronization with your timeline for efficient changes. See how MediaSilo is powering modern post-production workflows with a 14-day free trial.